
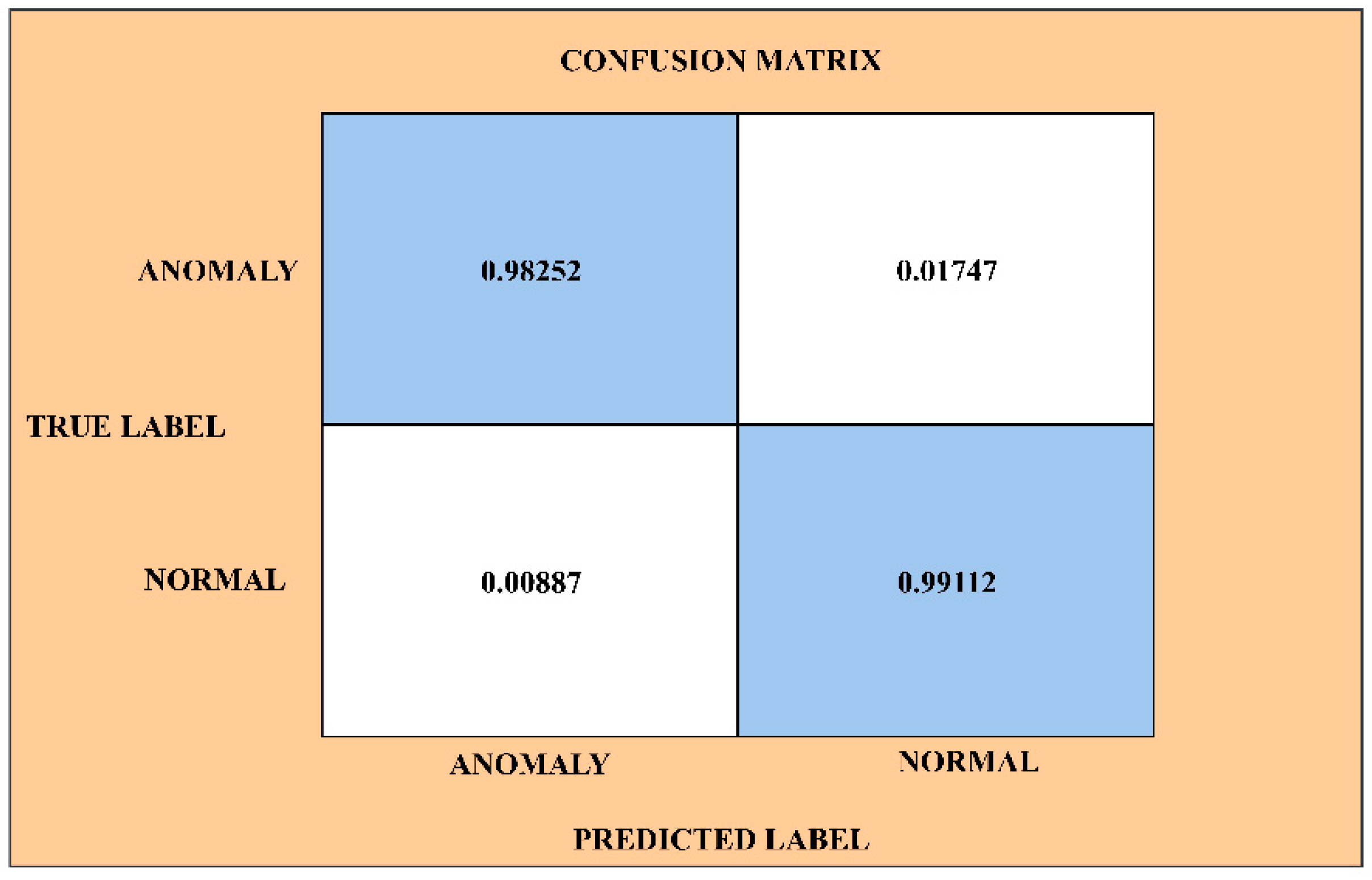

Caret confusion matrix

1/10/2024

At D&D we act on feedback from our customers to create custom and standalone solutions. We recently ran two successful and thoroughly engaging ML training sessions. The cohorts really enjoyed the section on ML classification methods, which focused on supervised ML techniques for classification. If you are interested in attending one of these courses and have limited exposure to ML techniques, then this course is for you.

One of the users asked if there was a default way in R (the language that we covered in the training) of visualising a confusion matrix. There is a standard visualisation built into the caret package, but this was hard to interpret.

Put quite simply, a confusion matrix is a table used to assess prediction accuracy. Throughout the training we used an example widely published by Google to explain the components of the table. The main point was to interpret the table for the example case we covered in the training: using hospital information to understand if a patient would be readmitted.

The confusion matrix in a binary classification problem (i.e. readmitted vs not readmitted) forms a 2 x 2 matrix whose four cells are the true positives (TP), false positives (FP), false negatives (FN) and true negatives (TN). This shows that training models and improving accuracy is essential to making the most accurate predictions possible.

To assess the confusion matrix there are a few very useful measures:

Accuracy: how accurate the model is overall. It is used as a benchmark to compare against other classification algorithms / models. The calculation for this metric is (TP + TN) / Total predictions.

Error (Misclassification) Rate: overall, how inaccurate the readmission predictions were. The calculation for this metric is (FP + FN) / Total predictions.

Sensitivity (True Positive Rate): when a patient actually is readmitted, how often we predict readmission. The calculation for this metric is TP / Actual Positives.

Specificity (True Negative Rate): when a patient actually is not readmitted, how often we predict that they won't be. The calculation for this metric is TN / Actual Negatives. The False Positive Rate, FP / Actual Negatives, is 1 minus the specificity.

There are a few other metrics to assess the confusion matrix, but the ones outlined are the most useful in our experience. The aim here is to reduce the complexity and the number of metrics you need to look at to assess a classification method.

Responding to feedback from the training, D&D's data science team was tasked with creating an example confusion matrix plot in R, so that our candidates can take the outputs of their models and put them to best use. The output of the caret package is useful for a quick assessment of model accuracy and fit; however, it is not the most aesthetically pleasing. We have created the visual as a function to complement the existing confusion matrix options contained in the caret package.

The candidates on the recent ML training performed data import, data cleaning, feature engineering, model training and creation of the confusion matrix. The following code assumes you have already fit a confusion matrix using the caret package. The model was trained, in this case using a decision tree, with the caret package. The confusion matrix was then created by using the following command: `cm <- confusionMatrix(data = dt_pred, test, positive = "1")`
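To make the four measures in this post concrete, here is a minimal sketch in base R that computes them from a 2 x 2 table of counts. The counts are made up purely for illustration and are not results from the training. The helper name `confusion_metrics` is hypothetical and is not part of caret. The table is laid out with predictions in rows and actual outcomes in columns, and "1" is assumed to mean readmitted.

```r
# Hypothetical counts for illustration only (not results from the training).
# Rows are predictions, columns are the actual (reference) outcomes.
counts <- matrix(
  c(50, 10,   # predicted "0": actual "0" (TN), actual "1" (FN)
     5, 35),  # predicted "1": actual "0" (FP), actual "1" (TP)
  nrow = 2, byrow = TRUE,
  dimnames = list(Prediction = c("0", "1"), Reference = c("0", "1"))
)

# Hypothetical helper: derive the four measures from a 2 x 2 table.
confusion_metrics <- function(tab, positive = "1") {
  negative <- setdiff(rownames(tab), positive)

  tp <- tab[positive, positive]  # predicted positive, actually positive
  tn <- tab[negative, negative]  # predicted negative, actually negative
  fp <- tab[positive, negative]  # predicted positive, actually negative
  fn <- tab[negative, positive]  # predicted negative, actually positive
  total <- sum(tab)

  c(
    accuracy    = (tp + tn) / total,
    error_rate  = (fp + fn) / total,
    sensitivity = tp / (tp + fn),  # TP / actual positives
    specificity = tn / (tn + fp)   # TN / actual negatives
  )
}

metrics <- confusion_metrics(counts)
print(round(metrics, 3))
```

If you already have a caret result, `cm$table` should be usable in place of `counts`, since caret stores the cross-tabulation with predictions in rows and reference values in columns. Accuracy and error rate always sum to 1, which is a handy sanity check.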
